Semantic Search Explained: Writing Content for Machines That Think Like Humans

Jared Bell

Project Manager

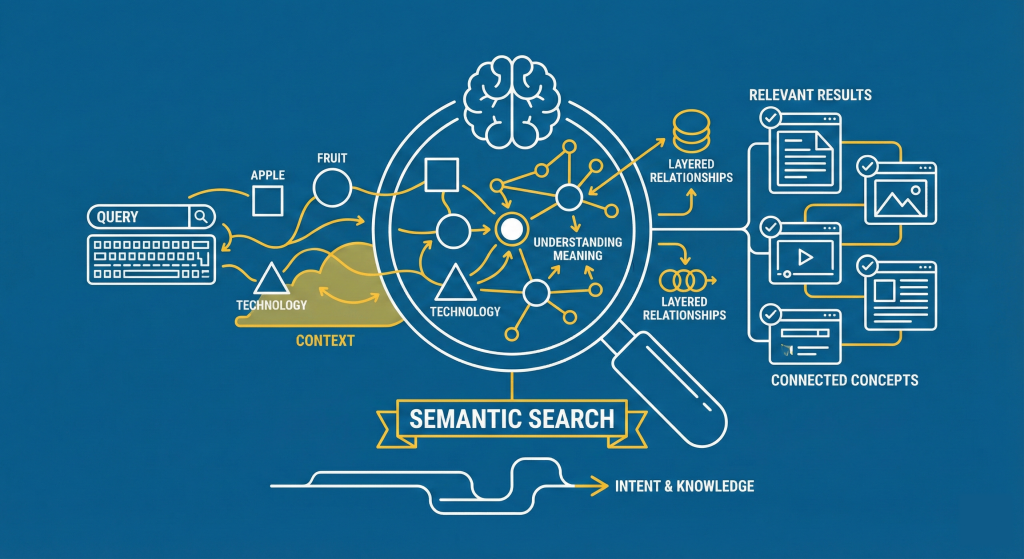

Semantic search is a search technology that focuses on understanding the meaning and intent behind a user’s query rather than simply matching keywords. It uses Natural Language Processing (NLP) and entities (concepts, people, places) to interpret both queries and content. For modern SEO, this means content must move beyond keywords to establish topical authority and directly answer the underlying questions that Google’s AI surfaces in AI Overviews and other semantic results.

1. What is Semantic Search? (And Why Can’t Google Read Your Mind?)

Semantic search is a data retrieval technique that interprets the contextual meaning of a query, allowing search engines to return relevant results even if they don’t contain the exact keywords typed by the user.

For years, search was largely lexical: if you searched for a keyword, the engine returned pages containing that exact keyword. The shift to semantic search is about making the machine understand intent.

The ultimate goal of semantic search is to bridge the gap between how humans speak (with context and nuance) and how machines process data by mapping concepts, entities, and user intent together.

| Old Search (Lexical) | New Search (Semantic) |
|---|---|
| Focus: Keyword density and exact matching. | Focus: Meaning, context, and topical authority. |
| Result: Pages with the exact phrase “best apple computer.” | Result: Pages about MacBook Pro or iMac (understanding Apple Inc. is the entity). |
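To make the contrast in the table concrete, here is a minimal Python sketch. It is a toy, not how Google works: the `DOCS` collection and the `ALIASES` table (phrase to entity) are made-up assumptions, and real systems learn these mappings rather than hard-coding them.

```python
# Toy document collection (hypothetical examples).
DOCS = {
    "doc1": "The best apple computer deals this week",
    "doc2": "MacBook Pro review: performance and battery life",
    "doc3": "How to bake an apple pie",
}

# Hypothetical alias table: surface phrase -> entity it refers to.
ALIASES = {
    "apple computer": "Apple Inc.",
    "macbook pro": "Apple Inc.",
    "imac": "Apple Inc.",
    "apple pie": "apple (fruit)",
}

def lexical_search(query, docs):
    """Old-style search: return docs containing the exact query phrase."""
    q = query.lower()
    return [doc_id for doc_id, text in docs.items() if q in text.lower()]

def entities_in(text):
    """Map any known phrase found in the text to its entity."""
    t = text.lower()
    return {entity for phrase, entity in ALIASES.items() if phrase in t}

def semantic_search(query, docs):
    """Entity-based search: return docs that share an entity with the query."""
    wanted = entities_in(query)
    return [doc_id for doc_id, text in docs.items() if wanted & entities_in(text)]

print(lexical_search("best apple computer", DOCS))   # ['doc1']
print(semantic_search("best apple computer", DOCS))  # ['doc1', 'doc2']
```

The MacBook Pro review never uses the words "apple computer", yet the entity-based lookup still returns it, while the apple pie recipe is left out.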

Understanding search intent is a key part of semantic search. SEO practitioners commonly group queries into four intent types: informational, transactional, navigational, and commercial investigation. Structuring your content around the dominant intent of a query helps the algorithm match your page to both the user’s goals and the semantic context.
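As a rough illustration of the four intent types, the sketch below labels a query using a handful of cue words. The cue lists in `INTENT_CUES` are assumptions chosen for the example; real intent detection relies on far richer signals than keyword cues.

```python
# Hypothetical cue words per intent, checked in this order.
INTENT_CUES = [
    ("transactional", ("buy", "order", "coupon", "price")),
    ("commercial investigation", ("best", "vs", "review", "compare")),
    ("navigational", ("login", "homepage", "official site")),
]

def classify_intent(query: str) -> str:
    """Return the first intent whose cue appears in the query."""
    words = query.lower().split()
    text = " ".join(words)
    for intent, cues in INTENT_CUES:
        for cue in cues:
            # Multi-word cues match as phrases, single words as whole tokens.
            if (" " in cue and cue in text) or cue in words:
                return intent
    # No cue matched: treat the query as a request for information.
    return "informational"

print(classify_intent("best insulation for a garage"))  # commercial investigation
print(classify_intent("what is semantic search"))       # informational
```

A quick pass like this over your target queries can show which intent dominates a topic before you decide on the page format.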

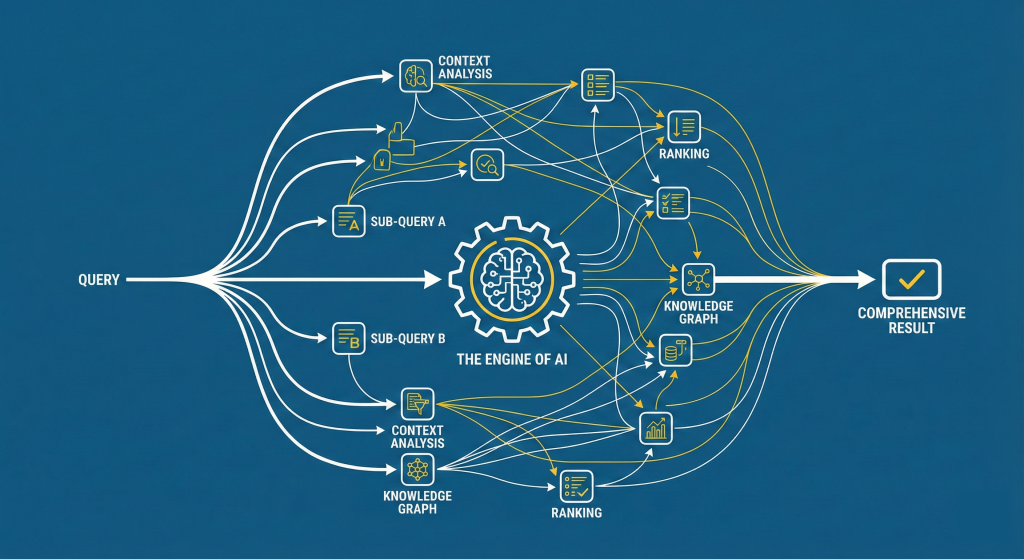

2. The Engine of AI: How Google Uses Query Fan-Out

To fully grasp semantic search, you need to understand the technical process that makes it work: Query Fan-Out.

What is the Google Query Fan-Out?

Query Fan-Out is the mechanism where Google’s AI takes a single, complex user query and immediately breaks it down into multiple, discrete sub-queries (“fanning out” the intent) to synthesize a single, comprehensive answer.

Think of a complex query like: “What is the best type of insulation for a garage in a cold climate?”

Instead of just searching that one phrase, the AI’s Fan-Out process generates these hidden sub-queries:

- “R-value required for garage insulation.”

- “Difference between spray foam and fiberglass for cold weather.”

- “Vapor barrier requirements for a garage in a cold climate.”

- “Energy efficiency considerations for garage insulation in winter.”

Fan-out queries often include comparisons, cost questions, regional considerations, common mistakes, and step-by-step processes. Including these variations in your content ensures you address not just the main query but the refined intent Google generates.
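One way to plan for these variations is to generate them from templates. The sketch below is a planning aid only: the templates in `FAN_OUT_TEMPLATES` are invented for illustration and do not reproduce the sub-queries Google actually generates.

```python
# Hypothetical templates, one per variation type named above.
FAN_OUT_TEMPLATES = {
    "comparison": "{topic} vs alternatives",
    "cost": "how much does {topic} cost",
    "regional": "{topic} requirements in {region}",
    "mistakes": "common mistakes with {topic}",
    "steps": "how to install {topic} step by step",
}

def fan_out(topic: str, region: str = "a cold climate") -> dict[str, str]:
    """Expand one topic into a set of likely sub-queries."""
    return {
        kind: template.format(topic=topic, region=region)
        for kind, template in FAN_OUT_TEMPLATES.items()
    }

for kind, sub_query in fan_out("garage insulation").items():
    print(f"{kind}: {sub_query}")
```

Each generated line is a candidate subheading or FAQ entry for the article.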

The Content Problem Solved by Fan-Out

If your article only answers the main query, you miss all the valuable sub-queries generated by the Fan-Out. To win the semantic search game, your content must pre-emptively address the fan-out by covering the full breadth of the topic with clear entity relationships and structured explanations.
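A simple way to spot missed sub-queries is to check how many of each sub-query's key terms appear in your draft. This is a crude term-overlap sketch, assuming a hand-picked `STOPWORDS` set and a 60% `threshold`; neither value comes from Google, and term overlap is only a rough stand-in for real semantic matching.

```python
import re

# Small, hand-picked stopword list (an assumption for this example).
STOPWORDS = {"a", "an", "the", "for", "in", "of", "and", "to", "between", "is", "what"}

def key_terms(text: str) -> set[str]:
    """Lowercase the text and keep the words that carry meaning."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}

def coverage_report(content: str, sub_queries: list[str], threshold: float = 0.6) -> dict[str, bool]:
    """Mark a sub-query as covered when enough of its terms appear in the content."""
    content_terms = key_terms(content)
    report = {}
    for query in sub_queries:
        terms = key_terms(query)
        overlap = len(terms & content_terms) / len(terms) if terms else 0.0
        report[query] = overlap >= threshold
    return report

draft = ("Spray foam has a higher R-value than fiberglass, "
         "which matters for garage insulation in cold weather.")
queries = [
    "R-value required for garage insulation",
    "Vapor barrier requirements for a garage in a cold climate",
]
print(coverage_report(draft, queries))
```

Here the draft covers the R-value question but not the vapor barrier one, which points to a section still worth writing.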

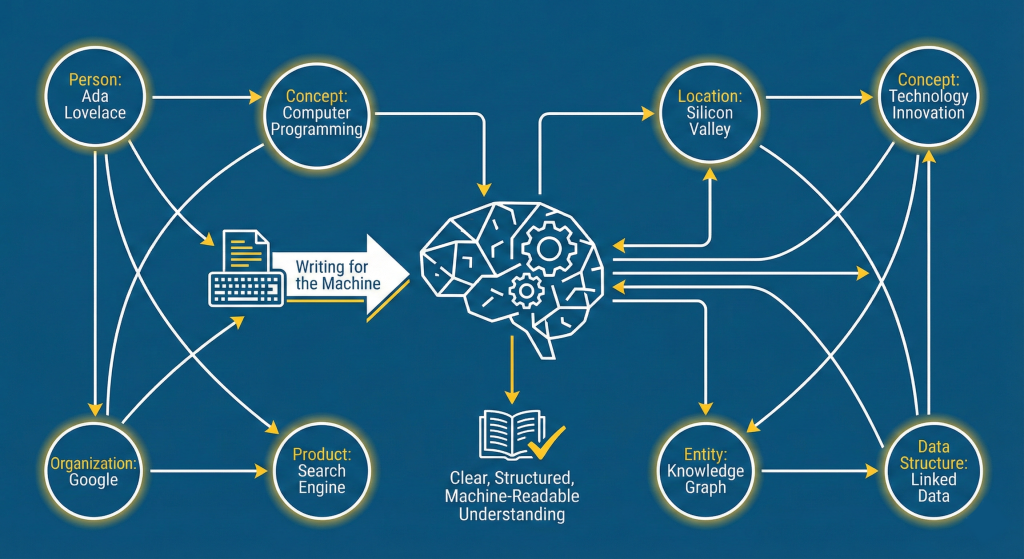

3. Writing for the Machine: The Power of Entities (Entity-Based SEO)

If Query Fan-Out is the engine, Entities are the fuel.

What are Entities in SEO?

An Entity is a distinct, well-defined thing or concept that Google recognizes and stores in its Knowledge Graph. Entities are the building blocks of semantic search.

An entity is unambiguous. The keyword “apple” is ambiguous (fruit? company?). The entity Apple Inc. is unambiguous. Your content needs to confirm which entities you are discussing and establish clear connections between them to support the Knowledge Graph’s understanding of your topic.

| Type of Entity | Example |
|---|---|
| Person | Elon Musk, Marilyn Monroe |
| Organization | Microsoft, The Federal Reserve |
| Concept | Zero-Click Search, Renewable Energy |
| Product | iPhone 15, Toyota Corolla |

These entities feed directly into Google’s Knowledge Graph. Optimizing for the Knowledge Graph means reinforcing relationships between entities through definitions, consistent naming, internal links, and contextual explanations. This helps Google confidently associate your content with the correct concepts.
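It can help to picture a knowledge graph as a list of simple facts in the form subject, relationship, object. The sketch below is a toy model: the `TRIPLES` data and the relationship names (`is_a`, `made_by`) are illustrative assumptions, not Google's actual Knowledge Graph schema.

```python
from collections import defaultdict

# Toy facts as (subject, relationship, object) triples.
TRIPLES = [
    ("Apple Inc.", "is_a", "Organization"),
    ("iPhone 15", "is_a", "Product"),
    ("iPhone 15", "made_by", "Apple Inc."),
    ("MacBook Pro", "is_a", "Product"),
    ("MacBook Pro", "made_by", "Apple Inc."),
]

def build_graph(triples):
    """Index the triples by subject so relationships are easy to look up."""
    graph = defaultdict(list)
    for subject, relation, obj in triples:
        graph[subject].append((relation, obj))
    return graph

def products_of(graph, organization):
    """Find every entity that has a made_by relationship to the organization."""
    return sorted(s for s, edges in graph.items() if ("made_by", organization) in edges)

graph = build_graph(TRIPLES)
print(products_of(graph, "Apple Inc."))  # ['MacBook Pro', 'iPhone 15']
```

Consistent naming matters here: if one page says "Apple Inc." and another says "Apple Computers", a lookup like this treats them as two different entities.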

How to Use Entities to Write Authority-Driven Content

To be recognized by the semantic web, your content strategy must shift:

- Focus on Topical Authority (Clusters): Stop writing 10 random articles on 10 different keywords. Instead, write 10 interlinked articles on the same core entity from different angles (a content cluster). This establishes your site as the authority on that specific topic.

- Use Descriptive Context: When you introduce a key entity, explicitly define its role. For example: “BERT, a Natural Language Processing (NLP) model that Google began applying to Search in 2019, helps the search engine interpret the context of words in a query.”

- Utilize Internal and External Links: Link to authoritative sources (like Wikipedia or official sites) and your own related cluster pages. This validates the entity’s existence and builds a visible map of relationships for the machine to crawl while strengthening semantic relevance through anchor text and contextual placement.

Strengthening E-E-A-T signals (Experience, Expertise, Authoritativeness, Trustworthiness), such as expert bios, citations, and demonstrated real-world experience, also reinforces your entity connections. Google’s Search Quality Rater Guidelines use E-E-A-T to describe whether an author and site are credible sources within a topic.

Unlike keyword density, entity density describes how thoroughly you cover the relevant concepts, attributes, and relationships tied to your topic. It is an informal SEO measure rather than a metric Google publishes, but high entity density signals comprehensive topical coverage, which makes it easier for Google to match your content to the topic.
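A simple way to approximate this idea is to list the entities a thorough article should mention and measure how many your draft includes. The function name `entity_coverage` and the plain substring match are assumptions for this sketch; no search engine publishes a formula like this.

```python
def entity_coverage(content: str, expected_entities: list[str]) -> tuple[float, list[str]]:
    """Return the share of expected entities mentioned, plus the ones missing."""
    text = content.lower()
    missing = [e for e in expected_entities if e.lower() not in text]
    covered = len(expected_entities) - len(missing)
    score = covered / len(expected_entities) if expected_entities else 0.0
    return score, missing

draft = "Spray foam and fiberglass differ in R-value."
expected = ["spray foam", "fiberglass", "R-value", "vapor barrier"]
score, missing = entity_coverage(draft, expected)
print(score, missing)  # 0.75 ['vapor barrier']
```

The list of missing entities is usually more useful than the score itself, because it tells you what to add.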

4. Technical Checklist: Formatting Content for LLM Citation

To maximize the chance of your content being cited in Google’s AI Overviews and by other Large Language Models (LLMs), your structure must make answers easy to find and extract.

The Answer-First Principle

When an H2 or H3 is phrased as a question (like the headings in this post), follow it immediately with a direct, one-to-two-sentence answer. This creates a self-contained block that is well suited to an AI Overview snippet and increases your likelihood of appearing in People Also Ask (PAA) boxes.
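You can audit a draft for this principle with a few lines of Python. The sketch assumes you already have each heading paired with the first paragraph under it, and it counts sentences with a rough punctuation split, so treat the results as prompts for review rather than a verdict.

```python
import re

def sentence_count(text: str) -> int:
    """Rough count: split after ., ! or ? when followed by whitespace."""
    return len([s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s])

def answer_first_violations(sections: list[tuple[str, str]], max_sentences: int = 2) -> list[str]:
    """List question headings whose first paragraph runs past the limit."""
    return [
        heading
        for heading, first_paragraph in sections
        if heading.rstrip().endswith("?") and sentence_count(first_paragraph) > max_sentences
    ]

sections = [
    ("What are Entities in SEO?",
     "An entity is a distinct thing. It is stored in the Knowledge Graph."),
    ("What is Query Fan-Out?",
     "First, some history. Search began long ago. Then things changed. Anyway."),
    ("Structure for Scannability", "One. Two. Three."),
]
print(answer_first_violations(sections))  # ['What is Query Fan-Out?']
```

Only question-style headings are checked, which matches the principle as stated above.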

Semantic HTML and Structured Data

- Use proper HTML tags: H1, H2, H3 must be nested logically and follow a hierarchy.

- Implement Schema Markup (especially FAQPage, Article, or HowTo schema). This is the clearest way to explicitly tell the search engine which entities, questions, and answers are on the page and reinforce topical relevance through machine-readable structure.
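Heading hierarchy is easy to check automatically. The sketch below scans raw HTML for heading tags and reports any place where a level is skipped, such as an H1 followed directly by an H3. It uses a regular expression for brevity, which is an assumption that the markup is simple; a proper HTML parser is safer on real pages.

```python
import re

def heading_levels(html: str) -> list[int]:
    """Return heading levels (1-6) in the order they appear in the HTML."""
    return [int(level) for level in re.findall(r"<h([1-6])\b", html, flags=re.IGNORECASE)]

def hierarchy_problems(html: str) -> list[str]:
    """Report each heading that jumps more than one level below the previous one."""
    levels = heading_levels(html)
    problems = []
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            problems.append(f"h{prev} is followed by h{cur} (skipped a level)")
    return problems

good = "<h1>Guide</h1><h2>Basics</h2><h3>Details</h3><h2>Next</h2>"
bad = "<h1>Guide</h1><h3>Details</h3>"
print(hierarchy_problems(good))  # []
print(hierarchy_problems(bad))   # ['h1 is followed by h3 (skipped a level)']
```

Moving back up the hierarchy (an H3 followed by an H2) is fine and is not flagged.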

Structure for Scannability

- Use bulleted lists and numbered steps. AI systems tend to extract information more easily from these structured, easy-to-digest formats.

- Keep paragraphs concise (2–4 lines) so the text stays readable, both for machines extracting passages and for users, which can improve engagement metrics like dwell time.

Incorporating semantically related terms helps reinforce the contextual meaning of your topic. These are often loosely called “LSI keywords,” although Google has said it does not use latent semantic indexing. Used naturally, related terms signal to search engines that your content is closely tied to the primary entity and its subtopics.

LLMs extract information using patterns, semantic relationships, and structured cues. When your content uses clear headings, short paragraphs, and answer-first formatting, it becomes much easier for AI systems to parse and cite your content in generated summaries or search answers.

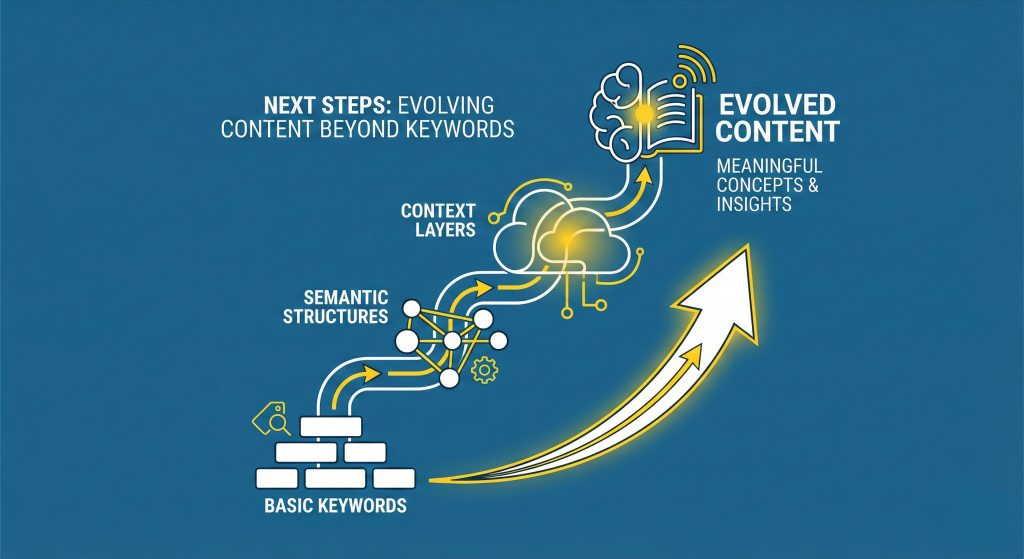

5. Next Steps: Evolving Content Beyond Keywords

The era of merely hitting a keyword density target is over. Semantic search and Query Fan-Out demand a strategic content shift:

- Stop writing for single keywords and start building content around core entities and topics. Every topic cluster should include structured internal linking – hub-to-spoke and spoke-to-spoke. This creates a semantic map that Google can easily crawl, strengthening the authority of your entire cluster.

- Map your topics to the sub-queries Google’s AI fans out to, so that you cover the full user intent.

- Prioritize structural clarity (Answer-First writing, lists, and schema) to make your content the easiest choice for AI to cite across AI search, generative answers, and voice assistants.
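The hub-to-spoke and spoke-to-spoke linking described above can be checked with a small script. The sketch assumes you have a map of each page to the pages it links to; the `LINKS` data and page names here are hypothetical.

```python
# Hypothetical cluster: page -> set of pages it links to.
LINKS = {
    "hub": {"spoke-a", "spoke-b", "spoke-c"},
    "spoke-a": {"hub", "spoke-b"},
    "spoke-b": {"hub"},
    "spoke-c": {"spoke-a"},
}

def cluster_gaps(links, hub):
    """Find missing hub-to-spoke, spoke-to-hub, and spoke-to-spoke links."""
    spokes = {page for page in links if page != hub}
    return {
        "hub_missing_links_to": sorted(spokes - links[hub]),
        "spokes_missing_link_to_hub": sorted(s for s in spokes if hub not in links[s]),
        "spokes_without_sibling_links": sorted(
            s for s in spokes if not (links[s] & (spokes - {s}))
        ),
    }

print(cluster_gaps(LINKS, "hub"))
```

In this example, spoke-c never links back to the hub and spoke-b links to no sibling spokes, so those are the two gaps to fix.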

You can also use tools like the Google Cloud Natural Language API, Google Search Console, and schema validators to analyze how your content is interpreted by search engines. These tools help you refine entity clarity, structure, and semantic relationships.

Is your content ready for the era of AI Overviews? Contact SmartSites today for a Content Audit and a full semantic SEO roadmap.

Make sure your CTA aligns with user intent. Because semantic search often delivers informational traffic, offer audits, strategy guides, or deeper insights rather than hard sales messaging.

FAQs: Related Questions on Semantic SEO

Q: What is the main difference between NLP and Semantic Search?

A: Natural Language Processing (NLP) is the technology (the code and algorithms) that allows a machine to understand human language. Semantic Search is the application of that technology to a search engine to better interpret the user’s intent and context, and provide more accurate, entity-based results.

Q: Does schema markup help with entity-based SEO?

A: Yes, absolutely. Schema markup (structured data) acts as a translator, explicitly labeling the entities and relationships on your page for search engines. This is the clearest signal you can send to reinforce your entity connections and improve eligibility for enhanced SERP features.
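For reference, here is a minimal sketch of generating FAQPage markup with Python's standard `json` module. The structure follows the schema.org vocabulary (`FAQPage`, `Question`, `acceptedAnswer`, `Answer`); the helper name `faq_jsonld` and the sample question are assumptions for the example.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

markup = faq_jsonld([
    ("Does schema markup help with entity-based SEO?",
     "Yes. It explicitly labels the entities and relationships on your page."),
])
print(markup)
```

The output goes inside a `<script type="application/ld+json">` tag on the page, and it is worth running through a schema validator before publishing.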
